I am a Research Scientist at Adobe Research in Basel, Switzerland, working in the AI Experiences Lab. I received my PhD from MIT, where I worked in the Computer Science & Artificial Intelligence Laboratory (CSAIL).

Designing a World AI Can Understand: Grounded Intelligence Across Digital and Physical Realms

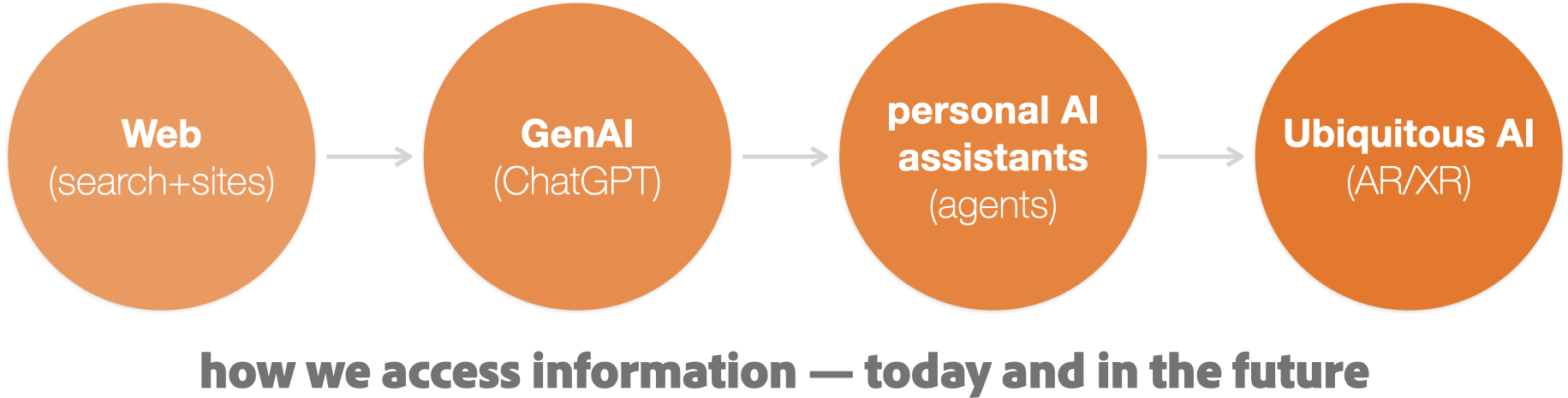

My research is driven by a fundamental shift in how users access information: We are moving beyond websites and search engines toward LLMs, personal AI agents, and spatial computing platforms. In this new paradigm, AI systems become the primary interface to knowledge. How can these emerging interfaces deliver reliable, context-aware information anywhere, at any moment?

I investigate this question through the lens of grounded ubiquitous intelligence: designing systems, objects, and infrastructures that make the world legible to AI.

Email: doga [at] {adobe.com, mit.edu, csail.mit.edu, acm.org, ieee.org}

At Adobe, I design systems that help organizations and creators adapt to an AI-first information ecosystem. This includes the Adobe LLM Optimizer and Sites Optimizer, which support content quality and discoverability in LLM-dominated environments, an emerging field known as generative engine optimization (GEO). I also develop interfaces for agentic AI, such as Adobe’s Project Face Off [🏆Adobe Summit’26 Best Sneak award] and Project Get Savvy, and explore multimodal, contextual AI+AR interactions such as augmented object intelligence.

During my PhD at MIT CSAIL, I developed new ways for physical objects to carry unobtrusive metadata that AI systems can interpret with high reliability. This line of work on ubiquitous metadata includes systems such as G-ID, InfraredTags, BrightMarker, and Imprinto, which embed machine-readable information directly into materials. This allows AI and AR systems to perceive objects with guarantees that vision-only algorithms cannot achieve.